Where does the bug appear (feature/product)?

Cursor IDE

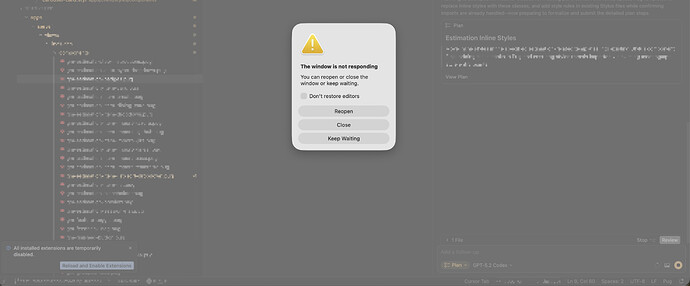

Describe the Bug

I'm just using Plan mode. I've already retried multiple times, and every attempt ends in an error (crash).

Steps to Reproduce

Use Plan mode normally.

Expected Behavior

Plan mode should work without errors.

Screenshots / Screen Recordings

Operating System

macOS

Version Information

Version: 2.4.21

VSCode Version: 1.105.1

Commit: dc8361355d709f306d5159635a677a571b277bc0

Date: 2026-01-22T16:57:59.675Z

Build Type: Stable

Release Track: Default

Electron: 39.2.7

Chromium: 142.0.7444.235

Node.js: 22.21.1

V8: 14.2.231.21-electron.0

OS: Darwin arm64 25.2.0

For AI issues: which model did you use?

The error occurs with all models.

Does this stop you from using Cursor

Yes - Cursor is unusable